We develop embedded AI for connected devices and products

We train and deploy custom artificial intelligence models to run on devices, IoT products and embedded systems.

We turn sensor data and real behavior into useful models optimized to work inside the product, not only in a lab environment.

- clear requirements for Embedded AI / Edge AI

- architecture aligned with the product stage

- validation with real hardware and real use

- maintainable implementation for future iterations

This service is a good fit if your product needs real intelligence on the device

It is designed for electronic products, connected devices and technical systems that need to detect, classify, predict or make decisions locally.

Patterns and anomalies

Companies that want to detect abnormal behavior or relevant patterns in sensor data.

Smarter products

Startups and teams developing connected devices with AI capabilities applied to real usage.

Classification or prediction at the edge

Projects that need local inference so the system can respond without always depending on the cloud.

Local decisions in IoT

Systems that must act directly on the device, gateway or embedded equipment, based on sound technical criteria.

Devices with real constraints

Hardware where memory, power consumption, latency and compute capacity force the model to be optimized.

Complete integration

Teams that want to connect the model, sensors, firmware, connectivity and the product itself under one vision.

What we do at RobotUNO with AI embedded into the product

We structure the service in three layers so data, model and integration become a real solution instead of a demo disconnected from the device.

We define what the system needs to learn

We start from the use case and data quality, because embedded AI only makes sense when it solves a real product need.

- model objective and meaningful metric

- data capture, cleaning and structure

- technical criteria based on hardware and context

We train the AI and connect it to the product

We choose the right architecture, train the model and integrate it with hardware, firmware or backend so that inference actually fits the product.

- architecture selection and training

- optimization for edge or the target system

- integration with sensors, firmware or platform

We take the AI out of the lab

We test the model under real conditions, adjust its behavior and iterate the solution so it keeps adding value when the system is operating.

- inference under real hardware constraints

- model validation in the context of use

- a foundation for retraining and improvement with new data

Adding AI to a product is not the same as connecting an API

Many projects talk about artificial intelligence, but very few solve what matters: how to train the model, how to integrate it into the product and how to make it run on real hardware with limited resources.

Data without a strategy

You have data, but not a clear plan to turn it into a useful model aligned with the real value of the product.

Classification or prediction on the device

You need to detect events, classify signals or predict behavior at the edge without relying on an improvised approach.

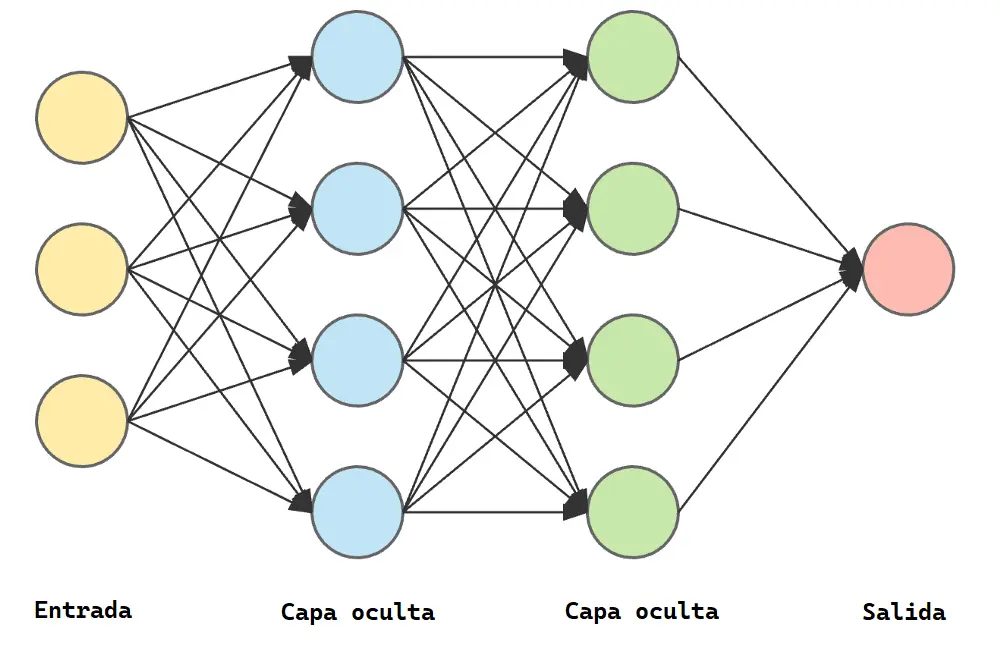

Unclear model architecture

It is not clear whether a CNN, RNN, LSTM/GRU, TCN, autoencoder or another approach fits the case best.

Real hardware limits

Inference must work within memory, power, CPU and latency constraints, not only in a demo.
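As a rough illustration of the memory side of these constraints, the sketch below estimates the storage needed for a model's weights at different numeric precisions and compares it with a budget. The function names, the parameter count and the 128 KiB budget are hypothetical values chosen for the example. The estimate covers weights only, so activations, buffers and runtime overhead would need to be added for a real device.

```python
# Minimal sketch: weight storage estimate versus a memory budget.
# All names and numbers here are illustrative assumptions.

BYTES_PER_PARAM = {"float32": 4, "float16": 2, "int8": 1}


def weight_footprint_bytes(num_params: int, dtype: str) -> int:
    """Return the storage needed for the weights alone, in bytes."""
    return num_params * BYTES_PER_PARAM[dtype]


def fits_budget(num_params: int, dtype: str, budget_bytes: int) -> bool:
    """Check whether the weights fit in the memory reserved for the model."""
    return weight_footprint_bytes(num_params, dtype) <= budget_bytes


if __name__ == "__main__":
    params = 60_000        # assumed size of a small model
    budget = 128 * 1024    # assumed 128 KiB reserved for weights
    for dtype in ("float32", "float16", "int8"):
        size = weight_footprint_bytes(params, dtype)
        print(f"{dtype}: {size} bytes, fits: {fits_budget(params, dtype, budget)}")
```

A check like this is only a first filter. Latency and power consumption still have to be measured on the target hardware.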

Lab AI

You want useful AI inside the product, not a nice-looking experiment disconnected from real usage and the technical environment.

Fragmented integration

Sensors, firmware, connectivity and the model need to be coordinated so the full solution stays coherent.

We develop applied AI solutions integrated into the product

At RobotUNO we train, optimize and integrate custom models for devices and connected systems, with a focus on real execution and technical usefulness.

Definition of the use case

We define what the system must detect, classify or predict and what real value AI should bring to the product.

Data work

We analyze, clean, structure and prepare datasets from sensors, time series or other relevant sources.
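One common way to structure a sensor time series for training is to cut it into fixed-length, overlapping windows. The sketch below shows the idea under simple assumptions: a single-channel signal held in a Python sequence, and a hypothetical helper named `sliding_windows`. Real projects usually add resampling, filtering and labeling on top of this step.

```python
from typing import List, Sequence


def sliding_windows(samples: Sequence[float], size: int, step: int) -> List[List[float]]:
    """Split a signal into windows of `size` samples, advancing by `step`.

    Trailing samples that do not fill a complete window are dropped.
    """
    if size <= 0 or step <= 0:
        raise ValueError("size and step must be positive")
    last_start = len(samples) - size
    return [list(samples[i:i + size]) for i in range(0, last_start + 1, step)]


if __name__ == "__main__":
    signal = [0.0, 0.1, 0.4, 0.9, 1.0, 0.8, 0.3, 0.1, 0.0, 0.0]
    for window in sliding_windows(signal, size=4, step=2):
        print(window)
```

The window length and step are design choices that depend on the sampling rate and on how long the events of interest last.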

Custom training

We train models adapted to the available data and expected behavior, avoiding generic solutions that do not fit.

Architecture selection

We choose the architecture that best fits the case: CNNs, RNNs, LSTM/GRU, TCNs, autoencoders or lighter models adapted to edge deployment.

Optimization for edge

We reduce complexity and tune inference so the model can run on real devices, gateways or embedded systems.
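One widely used way to reduce model size is quantization: storing weights as 8-bit integers plus a scale factor instead of 32-bit floats. The sketch below shows symmetric per-tensor quantization in plain Python. It is a simplified illustration, not a description of a specific toolchain, and the function names `quantize_int8` and `dequantize` are hypothetical.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Map float weights to integers in [-127, 127] with a shared scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # Fall back to a scale of 1.0 when every weight is zero.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the integer representation."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [0.52, -1.0, 0.25, 0.0, 0.73]
    q, scale = quantize_int8(weights)
    restored = dequantize(q, scale)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print("quantized:", q)
    print("worst-case error:", worst)
```

Each weight then takes one byte instead of four, at the cost of a rounding error of at most half the scale per weight. Whether that error is acceptable has to be checked against the model's metric on validation data.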

Integration with hardware and firmware

We bring the model into the product so sensors, firmware, connectivity and inference work in coordination.

Real validation

We test behavior outside the lab and adjust the system for real, sustained use.

Evolution of the model and the product

We leave a base ready for iteration, better datasets, retraining and long-term growth of the embedded intelligence.

What this service includes

Applied AI foundation

- analysis of the problem and the model goal

- evaluation of the available data type

- AI architecture approach

- data preparation, cleaning and structuring

- custom model training

Integration and evolution

- testing and evaluation

- optimization for edge or the target device

- integration with hardware, firmware or backend

- functional validation

- a base prepared for future evolution

Types of models and solutions we develop

We select and train the architecture that best fits the data, the device and the product goal instead of forcing a generic solution.

We move inference to the edge when the project needs it

In many products it makes more sense to process data locally than to depend on the cloud all the time. Inference on the device reduces latency, improves autonomy, strengthens privacy and lets the system act even without constant connectivity.

Low latency and immediate response

Useful when the system must react in real time and cannot wait for a round trip to external services.

Privacy and local processing

Suitable when sending all data outward is not desirable and the value lies in deciding inside the device itself.

Less dependence on connectivity

Important in remote devices, low-coverage environments or scenarios where the connection is not continuous.

Optimization for real hardware

We deploy models with memory, power consumption, CPU and the real behavior of the final product in mind.

The point is not only to train a model, but to make it work inside the product and under real conditions of use.

How we develop embedded AI for a device

We define the model objective

We understand what the system must detect, classify or predict and how that should add value inside the product.

- alignment between model and product

- clear technical objective

- real value for the use case

We work on the data

We analyze the source, prepare the dataset and define the training and validation strategy according to the case.

- cleaning and structuring

- time series or event data

- evaluation strategy
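For time series, one evaluation strategy worth considering is a chronological split: train on the earlier part of the recording and validate on the later part, so the validation data never precedes the training data. The sketch below assumes the records are already sorted by time, and the helper name `chronological_split` is hypothetical.

```python
from typing import List, Sequence, Tuple, TypeVar

T = TypeVar("T")


def chronological_split(records: Sequence[T], train_fraction: float = 0.8) -> Tuple[List[T], List[T]]:
    """Split time-ordered records into (train, validation) without shuffling."""
    if not 0.0 < train_fraction < 1.0:
        raise ValueError("train_fraction must be between 0 and 1")
    cut = int(len(records) * train_fraction)
    return list(records[:cut]), list(records[cut:])


if __name__ == "__main__":
    readings = [20.1, 20.3, 20.2, 20.8, 21.5, 22.0, 21.7, 21.9]
    train, validation = chronological_split(readings, train_fraction=0.75)
    print("train:", train)
    print("validation:", validation)
```

A random shuffle can leak information when neighboring samples overlap or are strongly correlated, which is why keeping time order is often the safer default for sensor data.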

We train the model

We choose the right architecture and train custom models while considering performance, stability and real usefulness.

- CNN, RNN, LSTM/GRU or TCN

- autoencoders and lightweight models

- performance and robustness

We optimize and integrate

We reduce complexity when needed, adapt inference to the hardware and integrate it with firmware, device or platform.

- optimization for edge

- integration with hardware

- system coherence

We validate in a real environment

We check behavior outside the lab, iterate when necessary and leave a base for future product evolution.

- real-use testing

- inference adjustments

- a base for future iterations

Why work with RobotUNO

Applied AI, not hype

We do not sell empty promises. We work on concrete use cases where intelligence brings a real improvement to the product.

Model, hardware and firmware in one solution

We integrate inference, electronics, sensors and connectivity under one technical direction.

Custom training

Models are designed according to the available data, the expected behavior and the constraints of the system.

Optimization for edge when required

We work with TinyML and Edge AI when the case needs low latency, local processing or operational autonomy.

Real technical criteria

We think about latency, memory, power consumption, stability and behavior outside the lab.

AI as part of the product

Intelligence is not left as an isolated layer. It becomes part of the complete system logic.

Real AI integrated into real devices

We develop applied AI solutions for hardware, integrating models, sensor data and decision logic into the system itself so the intelligence genuinely works in the product.

AI integrated into the device

We train and integrate models designed to run directly on real hardware, optimizing resources, response time and deployment viability in the product.

Useful models for signals and sensors

We work with time series, classification, detection and sensor-data analysis so the signal becomes useful decisions inside the system.

Edge AI with a practical mindset

We design lightweight, efficient solutions focused on real implementation, making sure the model can be integrated, maintained and scaled as the product evolves.

You can also explore our portfolio or contact us to discuss your project.

Does your product need real intelligence on the device?

If you want to detect patterns, classify signals or make edge decisions with a custom-trained model, we can help you turn that use case into a real technical solution.

Technologies we work with

We choose the right architecture, pipeline and deployment according to the type of data, the model goal and the hardware limitations.

Related services: Electronic product development, PCB design and manufacturing, IoT, Firmware and embedded systems, Android/iOS apps, MVP, and Industrialization and mass manufacturing.

Frequently asked questions

Can you train custom AI models for a device?

Yes. We can start from the problem, the available data and the target hardware to train a model aligned with the product.

Do you work with sensor data and time series?

Yes. That is one of the most common scenarios when we work with devices, IoT systems and real-time measured behavior.

Can you deploy the model directly at the edge?

Yes. When the case requires it, we optimize inference so it can run on the device, gateway or embedded system.

What types of neural networks do you use?

We work with architectures such as CNNs, RNNs, LSTM/GRU, TCNs, autoencoders and lightweight models adapted to edge deployment when the case requires it.

Do you only handle the AI or also the hardware and integration?

We can address the model on its own if the technical base is already clear, but our main value is integrating AI, hardware, firmware and connectivity into one solution.

Can you help from the data phase to the final product?

Yes. We can work from dataset definition and training all the way to integration in the real system.

Can an NDA be signed?

Yes. If the project requires it, we can sign a confidentiality agreement before reviewing sensitive information.

What do you need to assess an Edge AI project?

It helps to know the product, the goal of the model, the type of data available and the hardware or environment where it must run.

Let's talk about the AI your product needs

If you are developing a device, an IoT system or a connected product that needs embedded artificial intelligence, RobotUNO can help you train, optimize and integrate a custom model with sound technical criteria and product vision.